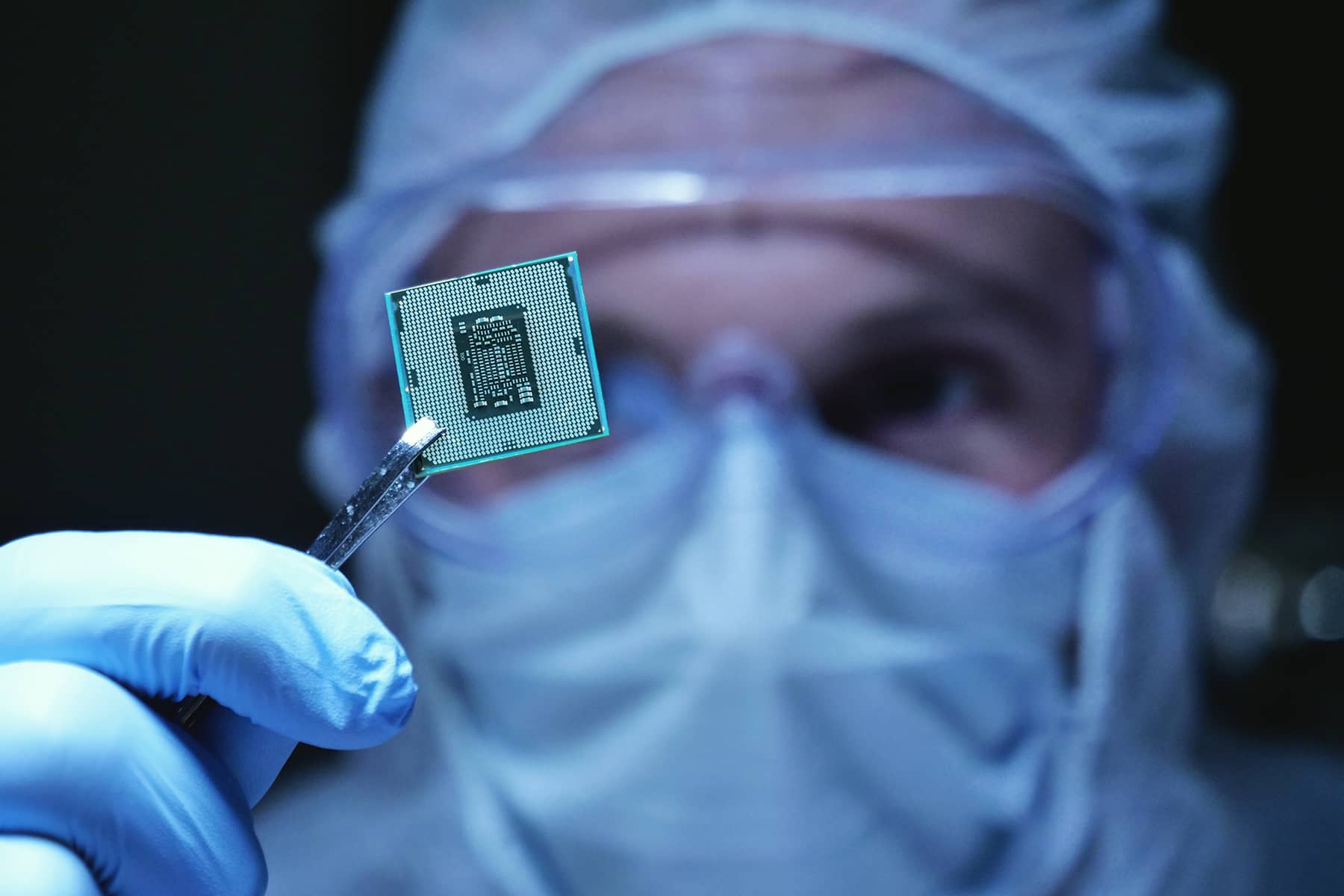

The hottest thing in technology is an unprepossessing sliver of silicon closely related to the chips that power video game graphics. It is an artificial intelligence chip, designed specifically to make building AI systems such as ChatGPT faster and cheaper.

Such chips have suddenly taken center stage in what some experts consider an AI revolution that could reshape the technology sector, and possibly the world along with it. Shares of Nvidia, the leading designer of AI chips, rocketed up almost 25% in late May after the company forecast a huge jump in revenue that analysts said indicated soaring sales of its products. The company was briefly worth more than $1 trillion.

SO WHAT ARE AI CHIPS, ANYWAY?

That isn’t an easy question to answer. “There really isn’t a completely agreed upon definition of AI chips,” said Hannah Dohmen, a research analyst with the Center for Security and Emerging Technology.

In general, though, the term encompasses computing hardware that’s specialized to handle AI workloads — for instance, by “training” AI systems to tackle difficult problems that can choke conventional computers.

VIDEO GAME ORIGINS

Three entrepreneurs founded Nvidia in 1993 to push the boundaries of computational graphics. Within a few years, the company had developed a new chip called a graphics processing unit, or GPU, which dramatically sped up both development and play of video games by performing multiple complex graphics calculations at once.

That technique, known formally as parallel processing, would prove key to the development of both games and AI. Two graduate students at the University of Toronto used a GPU-based neural network to win a prestigious 2012 AI competition called ImageNet by identifying photo images at much lower error rates than competitors.

The win kick-started interest in AI-related parallel processing, opening a new business opportunity for Nvidia and its rivals while providing researchers powerful tools for exploring the frontiers of AI development.

MODERN AI CHIPS

Eleven years later, Nvidia is the dominant supplier of chips for building and updating AI systems. One of its recent products, the H100 GPU, packs in 80 billion transistors, about 13 billion more than Apple’s latest high-end processor for its MacBook Pro laptop. Unsurprisingly, this technology isn’t cheap; at one online retailer, the H100 lists for $30,000.

Nvidia doesn’t fabricate these complex GPU chips itself, a task that would require enormous investments in new factories. Instead it relies on Asian chip foundries such as Taiwan Semiconductor Manufacturing Co. and Korea’s Samsung Electronics.

Some of the biggest customers for AI chips are cloud-computing services such as those run by Amazon and Microsoft. By renting out their AI computing power, those services let smaller companies and groups that couldn’t afford to build their own AI systems from scratch use cloud-based tools for tasks ranging from drug discovery to customer management.

OTHER USES AND COMPETITION

Parallel processing has many uses outside of AI. A few years ago, for instance, Nvidia graphics cards were in short supply because cryptocurrency miners, who set up banks of computers to solve thorny mathematical problems for bitcoin rewards, had snapped up most of them. That problem faded as the cryptocurrency market collapsed in early 2022.

Analysts say Nvidia will inevitably face tougher competition. One potential rival is Advanced Micro Devices, which already faces off with Nvidia in the market for computer graphics chips. AMD has recently taken steps to bolster its own lineup of AI chips.

Nvidia is based in Santa Clara, California. Co-founder Jensen Huang remains the company’s president and chief executive.

The Milwaukee School of Engineering (MSOE) opened the Diercks Computational Science Hall in 2019, made possible with a $34 million gift from MSOE alumnus Dwight Diercks and his wife Dian. A signature feature of the new facility was an NVIDIA GPU-powered supercomputer. Diercks was the senior vice president of software engineering at NVIDIA, and the gift was the largest MSOE had received from an alumnus.