There has been a serious and systemic failure to tackle antisemitism across the five biggest social media platforms, resulting in a “safe space for racists,” according to a new report.

Facebook, Twitter, Instagram, YouTube and TikTok failed to act on 84% of posts spreading anti-Jewish hatred and propaganda reported via the platforms’ official complaints system. Researchers from the Center for Countering Digital Hate (CCDH), a UK/US non-profit organization, flagged hundreds of antisemitic posts over a six-week period earlier this year. The posts, including Nazi, neo-Nazi and white supremacist content, received up to 7.3 million impressions.

Although each of the 714 posts clearly violated the platforms’ policies, fewer than one in six were removed or had the associated accounts deleted after being pointed out to moderators.

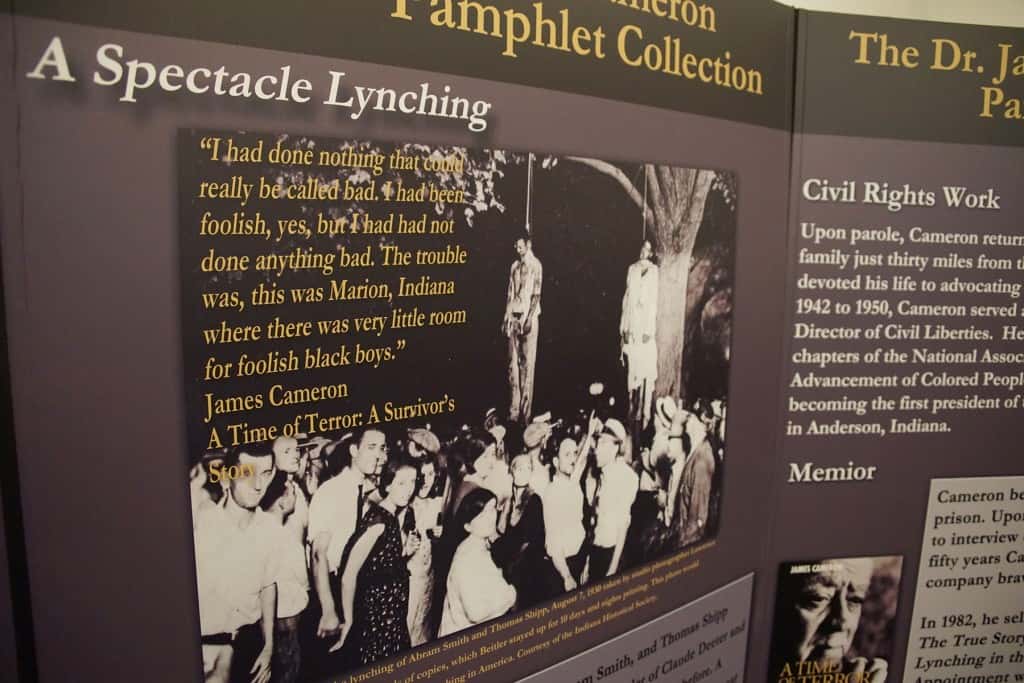

The report found that the platforms are particularly poor at acting on antisemitic conspiracy theories, including tropes about “Jewish puppeteers,” the Rothschild family and George Soros, as well as misinformation connecting Jewish people to the pandemic. Holocaust denial was also often left unchecked, with 80% of posts denying or downplaying the murder of 6 million Jews receiving no enforcement action whatsoever.

Facebook was the worst offender, acting on just 10.9% of posts, despite introducing tougher guidelines on antisemitic content last year. In November 2020, the company updated its hate speech policy to ban content that denies or distorts the Holocaust.

However, a post promoting a viral article that claimed the Holocaust was a hoax, accompanied by a falsified image of the gates of Auschwitz with a white supremacist meme, was not removed after researchers reported it to moderators. Instead, it was labelled as false information, which CCDH says contributed to it reaching hundreds of thousands of users. Statistics from Facebook’s own analytics tool show the article received nearly a quarter of a million likes, shares and comments across the platform.

Twitter also showed a poor rate of enforcement action, removing just 11% of posts or accounts and failing to act on hashtags such as #holohoax (often used by Holocaust deniers) or #JewWorldOrder (used to promote anti-Jewish global conspiracies). Instagram also failed to act on antisemitic hashtags, as well as videos inciting violence towards Jewish people.

YouTube acted on 21% of reported posts, while Instagram and TikTok each acted on around 18%. On TikTok, an app popular with teenagers, antisemitism frequently takes the form of racist abuse sent directly to Jewish users as comments on their videos.

The report, entitled Failure to Protect, found that TikTok did not act in three out of four cases of antisemitic comments sent to Jewish users. When TikTok did act, it more frequently removed individual comments than banned the users who sent them, barring accounts that sent direct antisemitic abuse in just 5% of cases.

Forty-one videos identified by researchers as containing hateful content, which have racked up a total of 3.5m views over an average of six years, remain on YouTube. The report recommends financial penalties to incentivize better moderation, with improved training and support. Platforms should also remove groups dedicated to antisemitism and ban accounts that send racist abuse directly to users.

Imran Ahmed, CEO of CCDH, said the research showed that online abuse is not about algorithms or automation, as the tech companies allowed “bigots to keep their accounts open and their hate to remain online”, even after human moderators had been alerted.

He said that social media, which he described as “how we connect as a society”, has become a “safe space for racists” to normalize “hateful rhetoric without fear of consequences”. “This is why social media is increasingly unsafe for Jewish people, just as it is becoming for women, Black people, Muslims, LGBT people and many other groups,” he added.

Ahmed said the test of the UK government’s online safety bill, first drafted in 2019 and introduced to parliament in May, is whether platforms can be made to enforce their own rules or face consequences themselves.

“While we have made progress in fighting antisemitism on Facebook, our work is never done,” said Dani Lever, a Facebook spokesperson. Lever told the New York Times that the prevalence of hate speech on the platform was decreasing, and she said that “given the alarming rise in antisemitism around the world, we have and will continue to take significant action through our policies.”

A Twitter spokesperson said the company condemned antisemitism and was working to make the platform a safer place for online engagement. “We recognize that there’s more to do, and we’ll continue to listen and integrate stakeholders’ feedback in these ongoing efforts,” the spokesperson said.

TikTok said in a statement that it condemns antisemitism and does not tolerate hate speech, and proactively removes accounts and content that violate its policies. “We are adamant about continually improving how we protect our community,” the company said.

YouTube said in a statement that it had “made significant progress” in removing hate speech over the last few years. “This work is ongoing and we appreciate this feedback,” said a YouTube spokesperson.

Maya Wolfe-Robinson

Portions originally published on The Guardian as A ‘safe space for racists’: antisemitism report criticises social media giants
